Introduction

In my previous blog, Dockerising our apps, I demonstrated the power of Docker and how we used it internally at Valiantys to start and populate our 1-minute demo project. In this blog, we’re going a little further and deploying our Docker containers to AWS. There are three methods I am going to describe briefly: traditional Amazon EC2, the ECS service and Elastic Beanstalk (EBS). Today we’ll focus mostly on ECS, as this is the service specifically designed to handle container deployments.

Traditional Amazon EC2

The Amazon Elastic Compute Cloud (EC2) is a web service from Amazon that provides compute capacity in the cloud. What that means is that we can start a predefined, preconfigured instance of a machine in the cloud and work with it. Because it is a machine just like your physical laptop, we can use it for pretty much any computing task. This means that using this method, we can do the following:

- Spin up a Linux box on the cloud

- SSH to the instance

- Install Git, Docker and the corresponding Docker Compose

- Check out our source code

- Navigate to the root directory and execute the command docker-compose up --build, as in the previous blog

This is a pretty straightforward method and should work for trivial cases. It isn’t robust or scalable, however, in the sense that you cannot easily create multiple instances and load balance them. There is also no monitoring of the instance. AWS has a quick guide to follow on this method here.
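As a rough sketch, the steps listed above might look like this once you have SSH-ed into the instance. It assumes an Amazon Linux instance (hence yum) and that Docker Compose has already been installed following Docker's own instructions. The repository URL and directory name are placeholders, not the real project's. Commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch of the manual EC2 route, meant to be run on the instance itself.
# Assumptions: Amazon Linux with yum; Docker Compose installed separately;
# REPO_URL and APP_DIR are hypothetical placeholders.

REPO_URL="${REPO_URL:-https://example.com/your-org/onemindemo.git}"
APP_DIR="${APP_DIR:-onemindemo}"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

deploy_on_ec2() {
  run sudo yum install -y git docker     # install Git and Docker
  run sudo service docker start          # start the Docker daemon
  run git clone "$REPO_URL" "$APP_DIR"   # check out the source code
  run cd "$APP_DIR"                      # move to the project root
  run sudo docker-compose up --build     # build and start the containers
}

deploy_on_ec2
```

Run it once as is to review the commands, then again with DRY_RUN=0 when you are happy with them.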

ECS Service

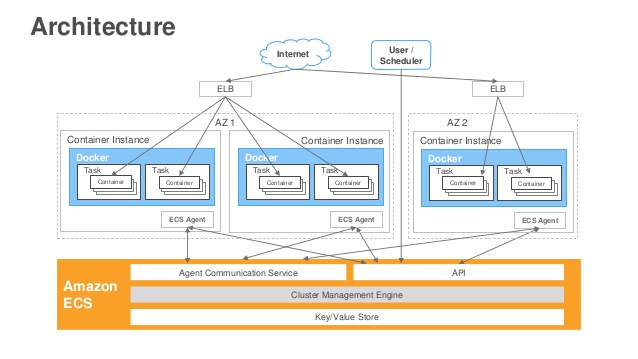

ECS stands for Amazon EC2 Container Service (since renamed Amazon Elastic Container Service). It was introduced to free users from the stress of having to manually install, operate and scale clusters of EC2 instances. It provides a set of APIs that can be used to launch and stop Docker containers and to query the status of clusters. The following diagram depicts more clearly what ECS is:

ECS is made up of a farm of EC2 instances that have Docker installed, as well as an ECS agent. The ECS agent is the component that controls the containers and their lifecycle events, such as starting and stopping a container. It also connects these special instances to the underlying infrastructure of AWS, such as volumes, security groups and IAM roles.

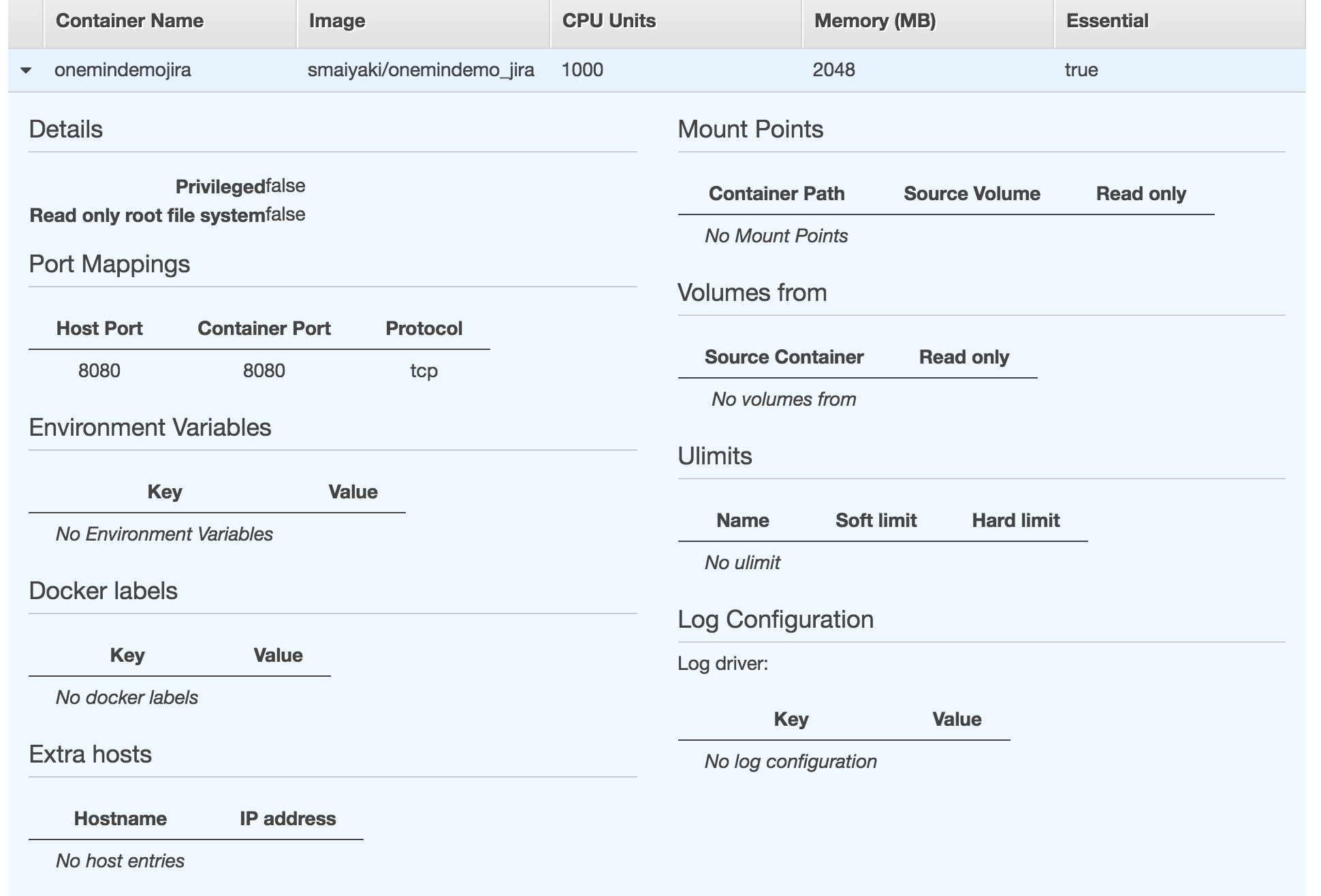

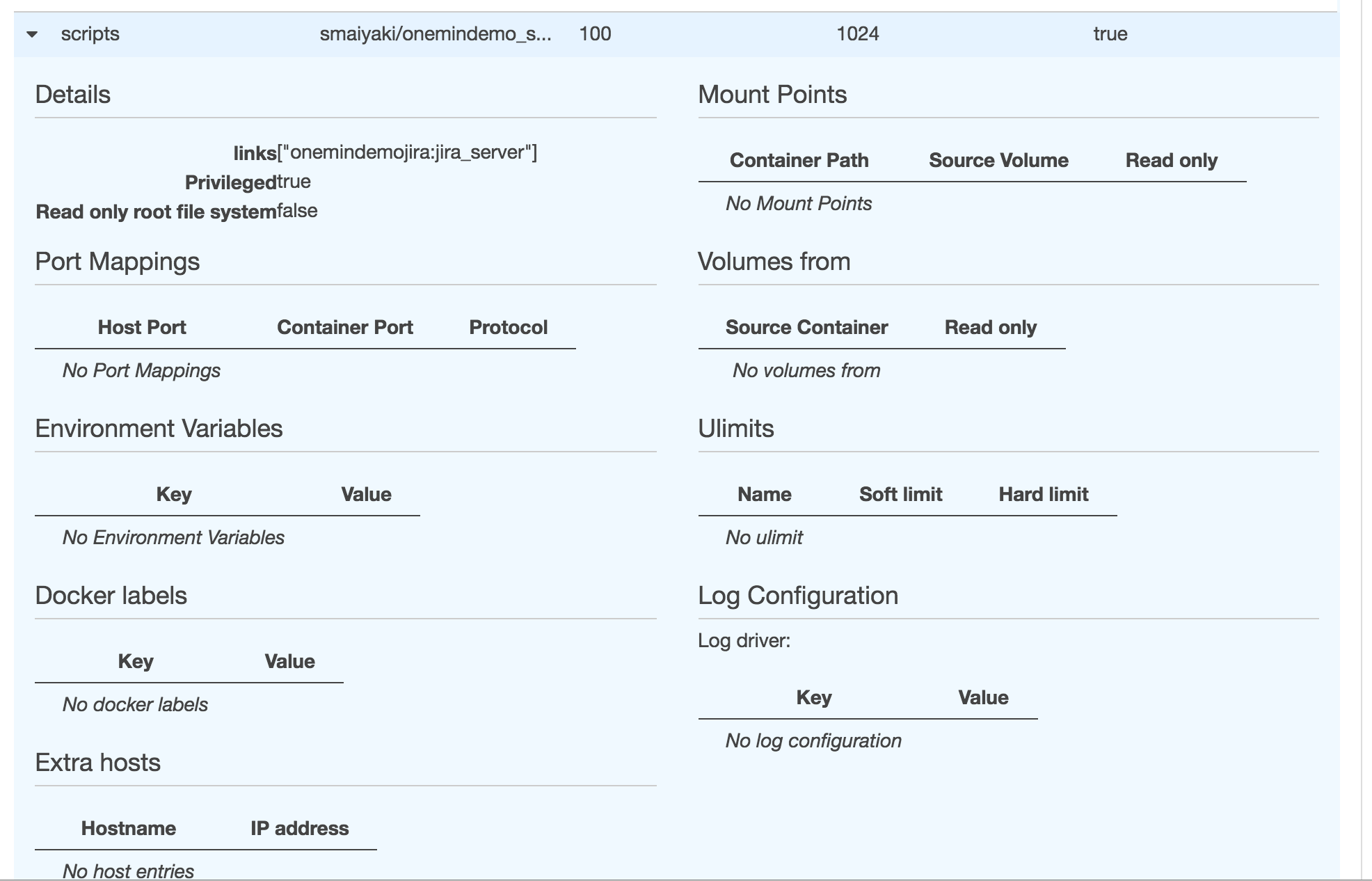

Now each of the instances has a collection of tasks that define the containers we want to run, as well as their properties such as port mappings, memory allocation and links. The tasks are derived from the compose file created earlier when running Docker locally in the development environment. We will see later how an ECS-compatible docker-compose file can be created from the original development version. At this point, just know that the tasks are derived from the compose files.

The tasks we have defined are wrapped in a service. The service is in charge of keeping a predefined number of instances of a task running.

Prerequisites

- Create an account in AWS. For security reasons, you will want to restrict access to the whole bunch of services in AWS. I recommend that you create an IAM user separate from your admin account and grant that user full access to the ECS service only. Keep the access key and secret key for that user in a secure place, because you will need them!

- Install the AWS CLI, a unified tool for managing AWS services

- Install the AWS ECS CLI, a tool for managing the ECS service
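If you prefer the command line to the console, the dedicated IAM user could be created roughly as follows once the AWS CLI is installed and configured with your admin credentials. This is a sketch under assumptions: the user name is a placeholder, and AmazonECS_FullAccess is the AWS managed policy I would expect to use here. Check what it grants before attaching it. Commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: create a dedicated IAM user for ECS deployments.
# Assumptions: run with admin credentials; DEPLOY_USER is a hypothetical name;
# the managed policy below is assumed to be the right fit for ECS-only access.

DEPLOY_USER="${DEPLOY_USER:-ecs-deployer}"
POLICY_ARN="arn:aws:iam::aws:policy/AmazonECS_FullAccess"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

create_deploy_user() {
  run aws iam create-user --user-name "$DEPLOY_USER"
  run aws iam attach-user-policy --user-name "$DEPLOY_USER" --policy-arn "$POLICY_ARN"
  # The secret key is shown only once in the output of this call: store it safely.
  run aws iam create-access-key --user-name "$DEPLOY_USER"
}

create_deploy_user
```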

Configure the environment

On your command line, run the command below to set up the access credentials that will be used to communicate with AWS.

aws configure

You will be asked a series of questions, including but not limited to the access key and the secret access key, as shown below.

AWS Access Key ID [****************4S7Q]:

AWS Secret Access Key [****************hfw0]:

Default region name [us-east-1]:

Default output format [None]:

The values of the parameters above constitute what we call the user profile. The access keys are stored in the ~/.aws/credentials file and the region and output format in the ~/.aws/config file, under a profile named default. If you want to give your profile a different name, you can rename it in those files or run aws configure --profile with the name you want. At this point, you will be able to communicate with AWS from the command line.
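To make the profile idea concrete, here is a small sketch of the two files the AWS CLI works with, written to a scratch directory so that nothing under ~/.aws is touched. As far as I know, the keys live in the credentials file and the region and output format in the config file. The profile name onemindemo and the key values are placeholders.

```shell
#!/bin/sh
# Sketch of the AWS CLI profile files, written to a scratch directory.
# Assumptions: the profile name "onemindemo" and the key values are placeholders.

DEMO_DIR="${TMPDIR:-/tmp}/aws-profile-demo"
mkdir -p "$DEMO_DIR"

# Access keys go in the credentials file, one section per profile.
cat > "$DEMO_DIR/credentials" <<'EOF'
[onemindemo]
aws_access_key_id = YOUR_ACCESS_KEY_ID
aws_secret_access_key = YOUR_SECRET_ACCESS_KEY
EOF

# Region and output format go in the config file.
# Named profiles use a "profile " prefix in this file.
cat > "$DEMO_DIR/config" <<'EOF'
[profile onemindemo]
region = eu-west-1
output = json
EOF

# Point the AWS CLI at the scratch files and select the profile.
export AWS_SHARED_CREDENTIALS_FILE="$DEMO_DIR/credentials"
export AWS_CONFIG_FILE="$DEMO_DIR/config"
export AWS_PROFILE=onemindemo

echo "Profile files written to $DEMO_DIR"
```

With real keys in place, any aws command run from the same shell would pick up this profile instead of the default one.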

Steps

1. Create the cluster

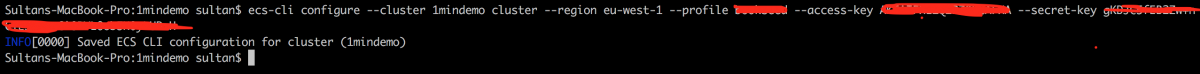

This can be done by running a parameterised command like the one below:

ecs-cli configure --cluster 1mindemo-cluster --region eu-west-1 --profile XXXXXXX --access-key XXXXXXXX --secret-key XXXXXXXXXXX

The profile is read from the AWS CLI files under ~/.aws. The ecs-cli configuration itself is stored in the ~/.ecs/config file.
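A note for readers on a more recent ECS CLI: to my knowledge, later releases split this step into a cluster configuration and a separate credentials profile. The sketch below shows that form. The configuration and profile names are placeholders, the cluster name assumes the spelling 1mindemo-cluster, and the keys are taken from the standard AWS environment variables. Commands are only printed unless DRY_RUN=0 is set, and the keys are never echoed.

```shell
#!/bin/sh
# Sketch: ecs-cli configuration in the two-command form used by newer releases.
# Assumptions: CONFIG_NAME and PROFILE_NAME are hypothetical; the access keys
# come from AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY.

CLUSTER="${CLUSTER:-1mindemo-cluster}"
REGION="${REGION:-eu-west-1}"
CONFIG_NAME="${CONFIG_NAME:-onemindemo}"
PROFILE_NAME="${PROFILE_NAME:-onemindemo-profile}"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

configure_ecs_cli() {
  # Cluster settings: name, region and launch type.
  run ecs-cli configure --cluster "$CLUSTER" --region "$REGION" \
    --default-launch-type EC2 --config-name "$CONFIG_NAME"

  # Credentials profile, handled separately so the keys are not printed in dry-run mode.
  if [ "${DRY_RUN:-1}" = "0" ]; then
    ecs-cli configure profile --profile-name "$PROFILE_NAME" \
      --access-key "$AWS_ACCESS_KEY_ID" --secret-key "$AWS_SECRET_ACCESS_KEY"
  else
    printf '+ %s\n' "ecs-cli configure profile --profile-name $PROFILE_NAME --access-key <hidden> --secret-key <hidden>"
  fi
}

configure_ecs_cli
```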

2. Start the Cluster

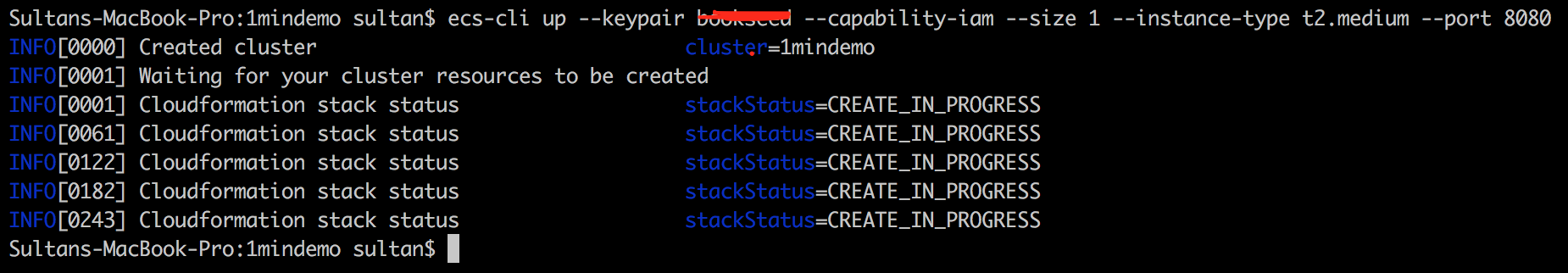

The cluster we created earlier has not been used yet: the configuration is just stored locally. Starting the cluster will activate it and start the underlying EC2 instances.

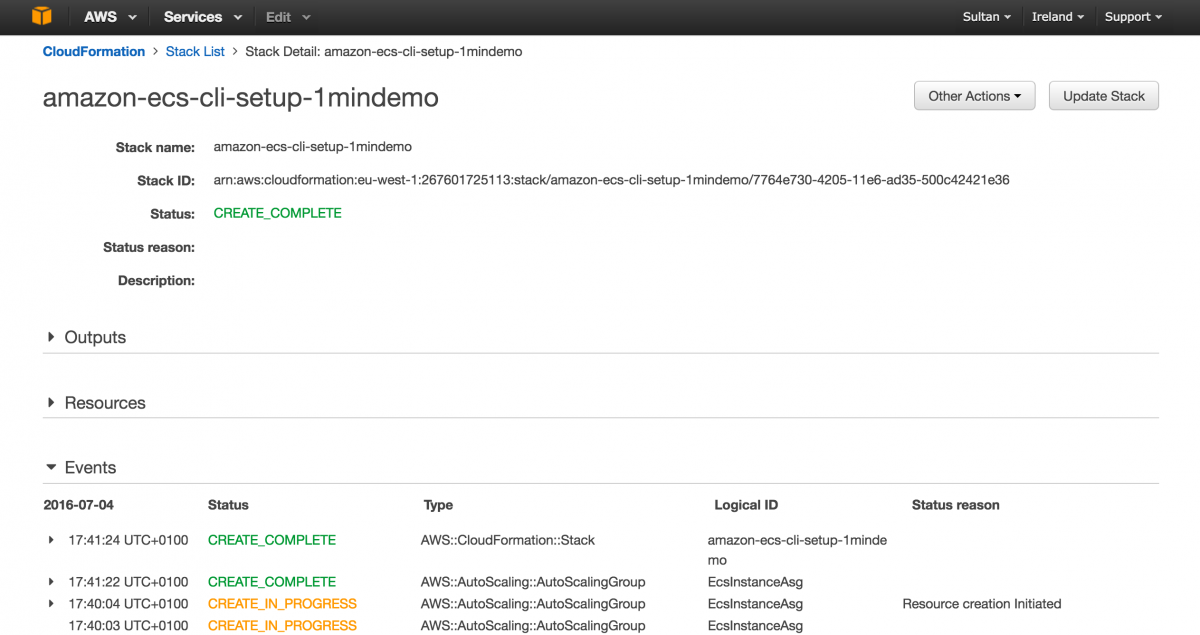

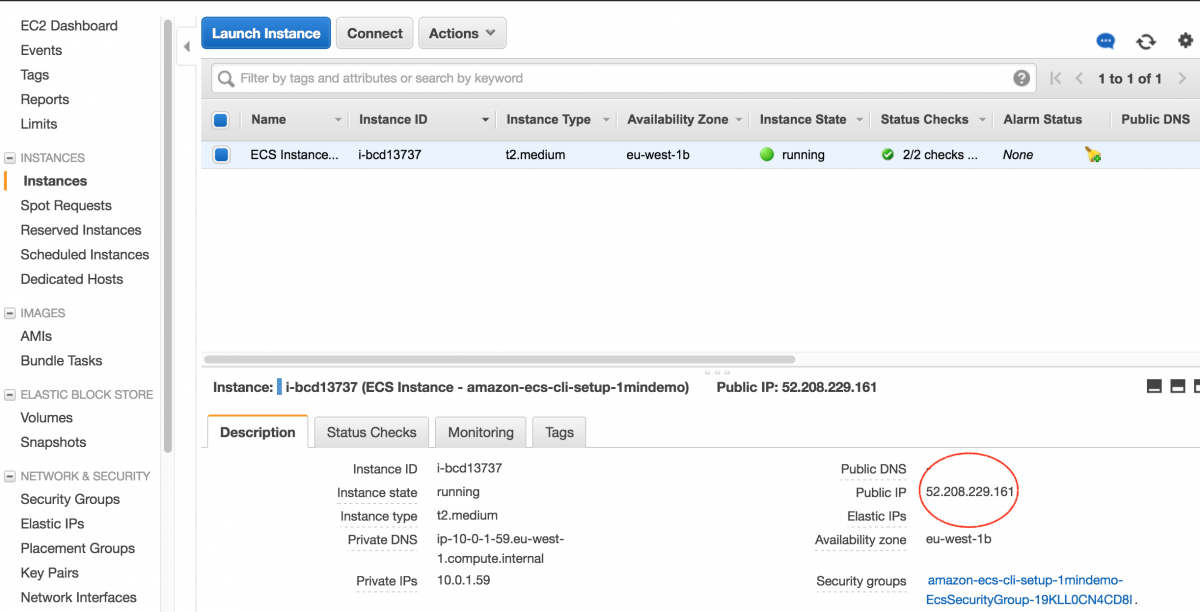

ecs-cli up --keypair XXXXXXX --capability-iam --size 1 --instance-type t2.medium --port 8080

Notice that we have opened port 8080 for JIRA. While creating the instance, AWS will also create a security group on the fly with port 8080 opened. If the port is not specified, the default port 80 is used. Also notice that we have an instance type of t2.medium, and have opted to start only a single instance with the --size option. The command does two things: it creates a CloudFormation stack, which ultimately starts the EC2 instance. CloudFormation is another service from AWS that enables users to manage their infrastructure. The service itself is free, and you pay only for the resources it creates.

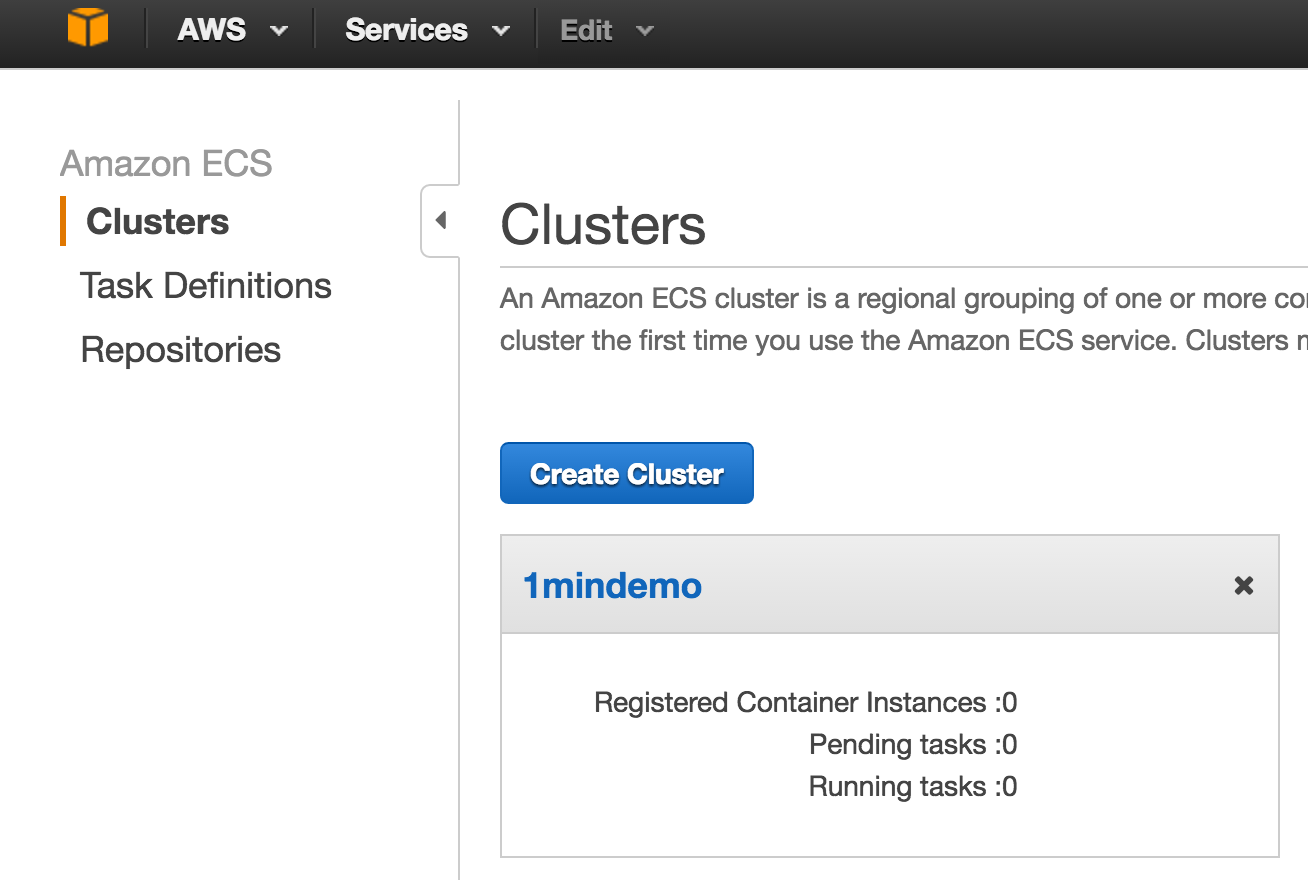

Now you can see that the cluster has been started.
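The same check can be made from the command line. The sketch below assumes the cluster is named 1mindemo-cluster and that ecs-cli names its CloudFormation stack amazon-ecs-cli-setup-<cluster>, which is the convention I have seen but worth verifying in your own console. Commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: confirm that the stack and the cluster are up.
# Assumptions: cluster name and stack naming convention as described above.

CLUSTER="${CLUSTER:-1mindemo-cluster}"
REGION="${REGION:-eu-west-1}"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

check_cluster() {
  # Status of the CloudFormation stack created by ecs-cli up.
  run aws cloudformation describe-stacks --region "$REGION" \
    --stack-name "amazon-ecs-cli-setup-$CLUSTER" \
    --query "Stacks[0].StackStatus"

  # Cluster status and the number of EC2 instances registered with it.
  run aws ecs describe-clusters --region "$REGION" --clusters "$CLUSTER" \
    --query "clusters[0].[status,registeredContainerInstancesCount]"
}

check_cluster
```

With --size 1, a healthy cluster should report one registered container instance.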

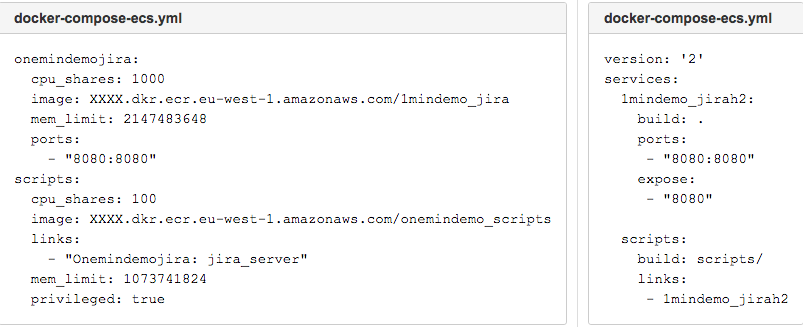

3. Create an AWS-compatible docker-compose file

If you remember, we created a docker-compose file to wire our two containers together and specify the order in which the containers will be started. The AWS version of the docker-compose file contains the same wiring, but with a few limitations. The most prominent restriction is that, at the time of writing, AWS does not support version two of the docker-compose standard. This affects the format of the YAML file, but has no effect on functionality. You can read more about the versioning here.

Another difference between the files is that the AWS version whitelists only a few parameters. For example, the expose directive is not supported in AWS, probably because this is handled by the security group in EC2. The build directive is not supported either; it has been removed in favour of image. This makes sense since we are not deploying locally, but to a cloud server. It means that we first have to push our container images to Docker Hub or another registry. I am going to show how we can upload to both Docker Hub and Amazon ECR.

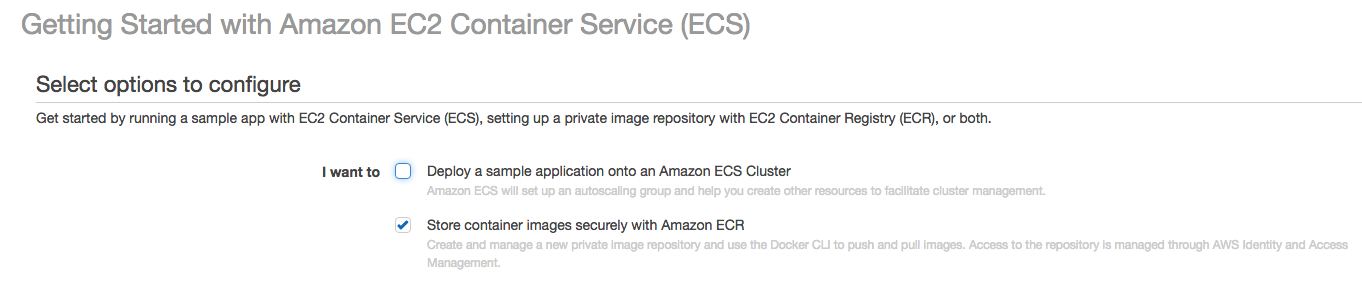

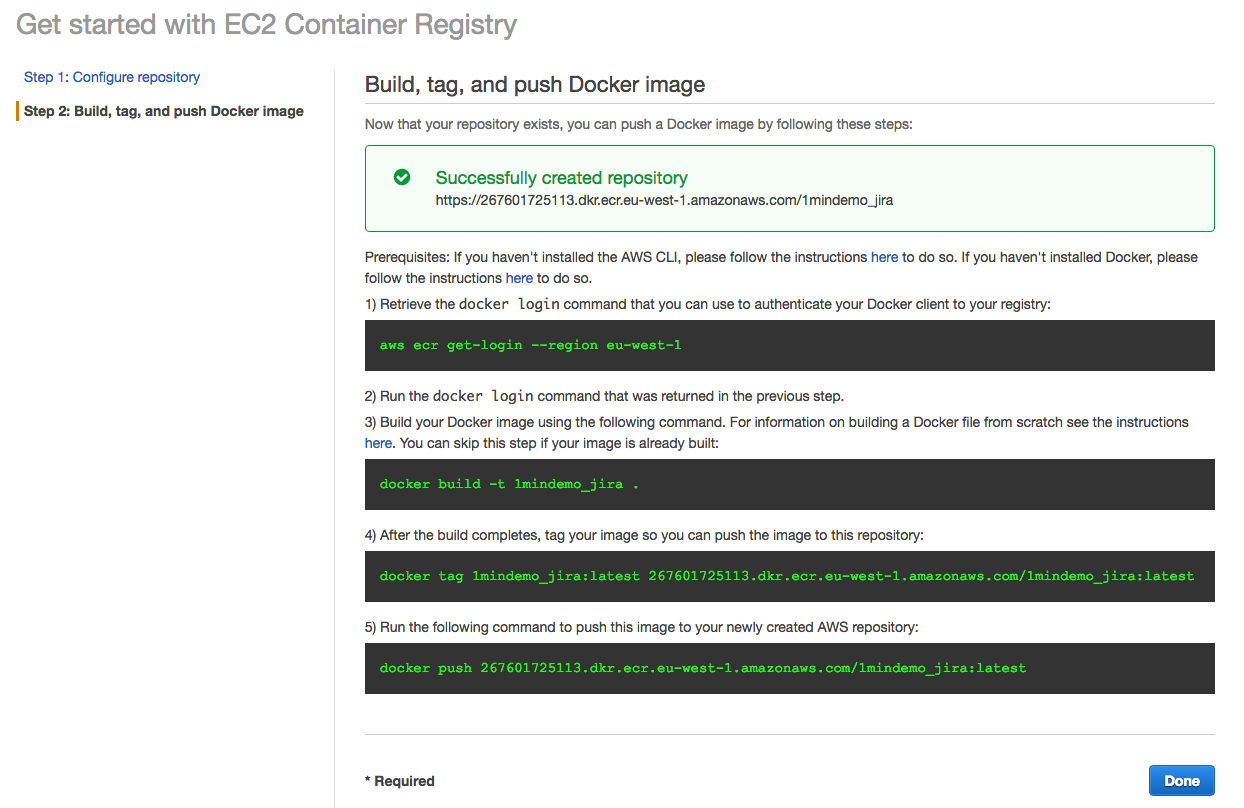

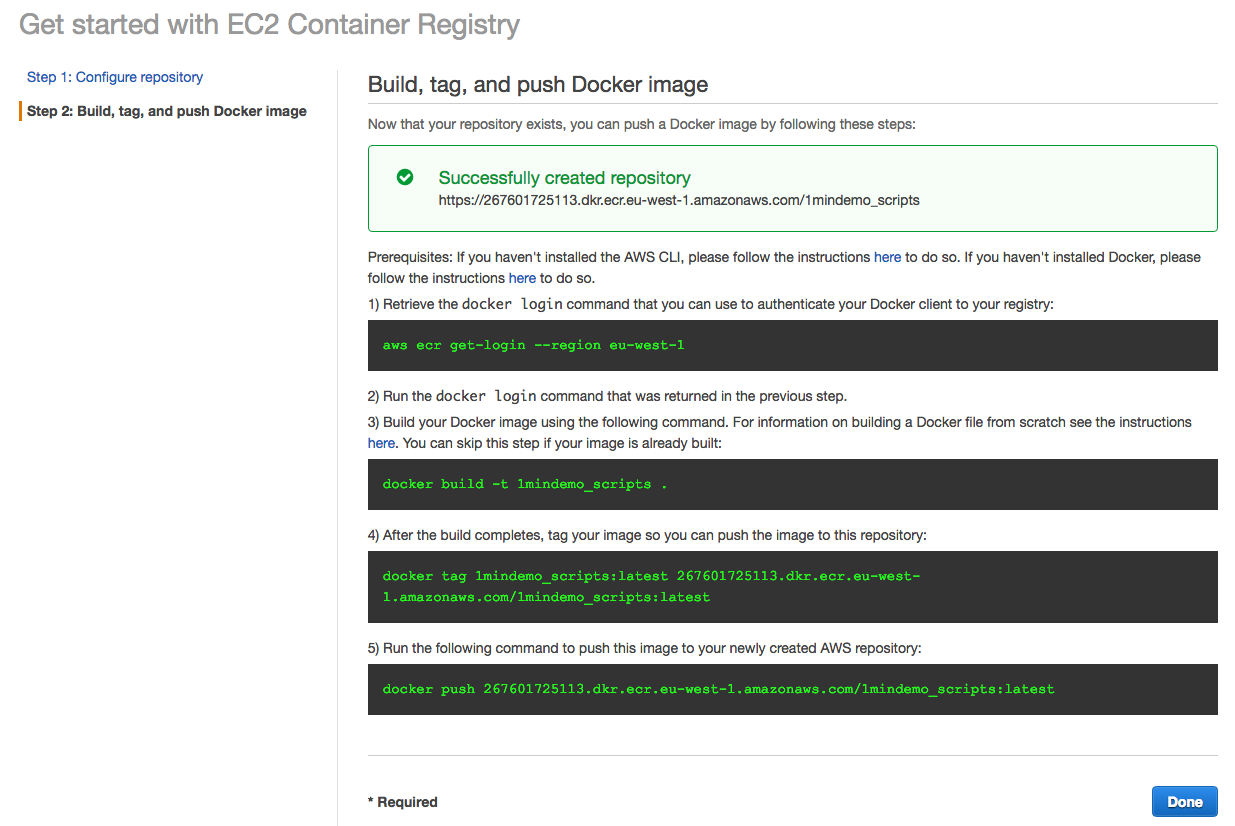

Amazon ECR: on the dashboard, choose the option to create a repository to store your container images. A series of commands will be provided for you to build, tag and push your image.
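The commands that the ECR dashboard gives you tend to look roughly like the sketch below. The account ID is a placeholder, the repository is assumed to be named after the image, and get-login-password needs a reasonably recent AWS CLI. Commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: build an image and push it to Amazon ECR.
# Assumptions: ACCOUNT_ID is a hypothetical 12-digit account ID; the repository
# name mirrors the image name; run from the folder that holds the Dockerfile.

ACCOUNT_ID="${ACCOUNT_ID:-123456789012}"
REGION="${REGION:-eu-west-1}"
REPO="${REPO:-onemindemo_jira}"
REGISTRY="$ACCOUNT_ID.dkr.ecr.$REGION.amazonaws.com"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

push_to_ecr() {
  run aws ecr create-repository --repository-name "$REPO" --region "$REGION"

  # Log Docker in to the registry; the password is piped straight from the AWS CLI.
  if [ "${DRY_RUN:-1}" = "0" ]; then
    aws ecr get-login-password --region "$REGION" |
      docker login --username AWS --password-stdin "$REGISTRY"
  else
    printf '+ %s\n' "aws ecr get-login-password --region $REGION | docker login --username AWS --password-stdin $REGISTRY"
  fi

  run docker build -t "$REPO" .                            # build the image locally
  run docker tag "$REPO:latest" "$REGISTRY/$REPO:latest"   # tag it for the registry
  run docker push "$REGISTRY/$REPO:latest"                 # upload it
}

push_to_ecr
```

The image line in the compose file would then point at the full registry path rather than the Docker Hub name.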

Docker Hub:

docker build -t smaiyaki/onemindemo_jira .

docker push smaiyaki/onemindemo_jira

Now we can cd to our scripts folder and do the same:

docker build -t smaiyaki/onemindemo_scripts .

docker push smaiyaki/onemindemo_scripts

Now let’s have a look at our new compose file. To tell it apart from the original, this is now named docker-compose-ecs.yml.
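For illustration, a file along these lines could be generated with the sketch below. It is not the exact file from the project: the service names jira and scripts, the memory limits and the link between the two containers are assumptions. Only the image names and port 8080 come from the steps above. It uses the version one layout (services at the top level, no build or expose).

```shell
#!/bin/sh
# Sketch: write a version 1 style compose file for ECS.
# Assumptions: service names, mem_limit values (in bytes) and the link
# from scripts to jira are illustrative, not taken from the real project.

COMPOSE_FILE="${COMPOSE_FILE:-docker-compose-ecs.yml}"

cat > "$COMPOSE_FILE" <<'EOF'
jira:
  image: smaiyaki/onemindemo_jira
  mem_limit: 2147483648
  ports:
    - "8080:8080"
scripts:
  image: smaiyaki/onemindemo_scripts
  mem_limit: 268435456
  links:
    - jira
EOF

echo "Wrote $COMPOSE_FILE"
```

The memory limits here are sized to fit a single t2.medium instance. Adjust them to whatever your containers actually need.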

For a complete list of supported parameters, you can review the AWS guidelines on docker-compose support.

4. Start the Service

ecs-cli compose --file docker-compose-ecs.yml service up

This goes through the compose file, automatically generates the task and starts the containers on the EC2 instance that was created earlier in step two.

JIRA is now available from the public address of the EC2 instance.

In our case, JIRA, pre-populated with data, will be available at http://52.208.229.161:8080/
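A quick way to confirm this from the terminal is sketched below: list the containers with ecs-cli ps, then ask the instance for an HTTP status code. The host defaults to the address quoted above, so replace it with the public address of your own instance. Commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: check that the containers are running and that JIRA answers on port 8080.
# Assumption: HOST is the public address of your EC2 instance.

HOST="${HOST:-52.208.229.161}"

# Print each command instead of executing it unless DRY_RUN=0 is set.
run() { if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '+ %s\n' "$*"; fi; }

check_service() {
  run ecs-cli ps                                    # containers, their state and ports
  run curl -sS -o /dev/null -w '%{http_code}\n' "http://$HOST:8080/"
}

check_service
```

JIRA can take a few minutes to start, so a connection error right after service up is not necessarily a failure.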

Clean up

Clean up involves two steps. Firstly, we need to remove the service, which stops the containers, and then we need to delete the cluster, which takes the EC2 instance(s) down with it. You can do that by running these commands:

ecs-cli compose --file docker-compose-ecs.yml service rm

ecs-cli down --force

Elastic Beanstalk

Elastic Beanstalk (abbreviated here as EBS, not to be confused with Amazon Elastic Block Store) is another service from AWS that allows users to deploy and manage applications in the AWS cloud. When the EBS service is used to deploy applications, many services such as EC2, S3, SNS, CloudWatch and RDS are started under the hood and work together. EBS is meant to provide users with a single entry point to the full power of AWS.

There are many tutorials on how to configure and deploy common applications developed in Java, Python, PHP, Go, Ruby and even custom applications. Typically, EBS is used to deploy web applications, and all the examples on the official site are geared towards that.

Typically, when an EBS environment is started, a CloudFormation stack is created from a template that defines the other resources to be started, such as the VPC, load balancer, IAM roles, security groups and instance types. Another service that must be started is S3. AWS uses it to temporarily store your application until the instance is ready for deployment.

S3 is also used by EBS to store a version of your app on every deployment. Since EBS is meant for deploying web applications, the app is accessible at a URL like <environment_name>.<region>.elasticbeanstalk.com. This is made possible by aliasing the environment’s CNAME (URL) in the Route 53 service. If your application requires a database, the RDS service is started for you. In a similar way, if your app requires a queuing service, SQS will be started automatically for you by EBS. So basically, the services started depend on your design, and the sky’s the limit with EBS!

Recently, Docker has been added to the list of platforms that can be deployed. Not only that, EBS also supports multicontainer Docker deployments. As you might have already guessed, when using EBS to deploy a multicontainer Docker application, ECS is started for you behind the scenes. For more detail, you can have a look here.
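To give a flavour of the multicontainer route, EBS describes the containers in a Dockerrun.aws.json file (version 2), which plays a role similar to our compose file. The sketch below writes one for our two images. The container names, memory values (in MiB), host port and link are assumptions for illustration, and we did not deploy the project this way.

```shell
#!/bin/sh
# Sketch: write a version 2 Dockerrun.aws.json for a multicontainer EBS environment.
# Assumptions: container names, memory values, host port 80 and the link from
# scripts to jira are illustrative only.

DOCKERRUN_FILE="${DOCKERRUN_FILE:-Dockerrun.aws.json}"

cat > "$DOCKERRUN_FILE" <<'EOF'
{
  "AWSEBDockerrunVersion": 2,
  "containerDefinitions": [
    {
      "name": "jira",
      "image": "smaiyaki/onemindemo_jira",
      "essential": true,
      "memory": 2048,
      "portMappings": [
        { "hostPort": 80, "containerPort": 8080 }
      ]
    },
    {
      "name": "scripts",
      "image": "smaiyaki/onemindemo_scripts",
      "essential": false,
      "memory": 256,
      "links": ["jira"]
    }
  ]
}
EOF

echo "Wrote $DOCKERRUN_FILE"
```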

Summary

We have seen how our 1-minute Docker project can be deployed to AWS using three available methods. Of the available routes, we focused mainly on the service created specifically for Docker, and gave highlights of the other methods. As we have seen, the traditional method is not meant for production environments but for trials only, as it lacks management and metrics on the status of the instance. This leaves us with the option of using either ECS or EBS. There are lots of opinions around the two services. It comes down to how much control you want over your infrastructure, which is why we decided to follow the path of controlling our infrastructure and to learn more about the container service.

What’s next?

Now that we’ve created our Docker containers and shown how we can use our images to deploy to AWS, the next step will be to see how we can add automation with Bamboo. Stay tuned to see how we do it!